Redshift

Integrate Sifflet with Redshift to get end-to-end lineage, monitor assets such as Spectrum external tables, and enrich your metadata with Sifflet-generated insights for better data observability.

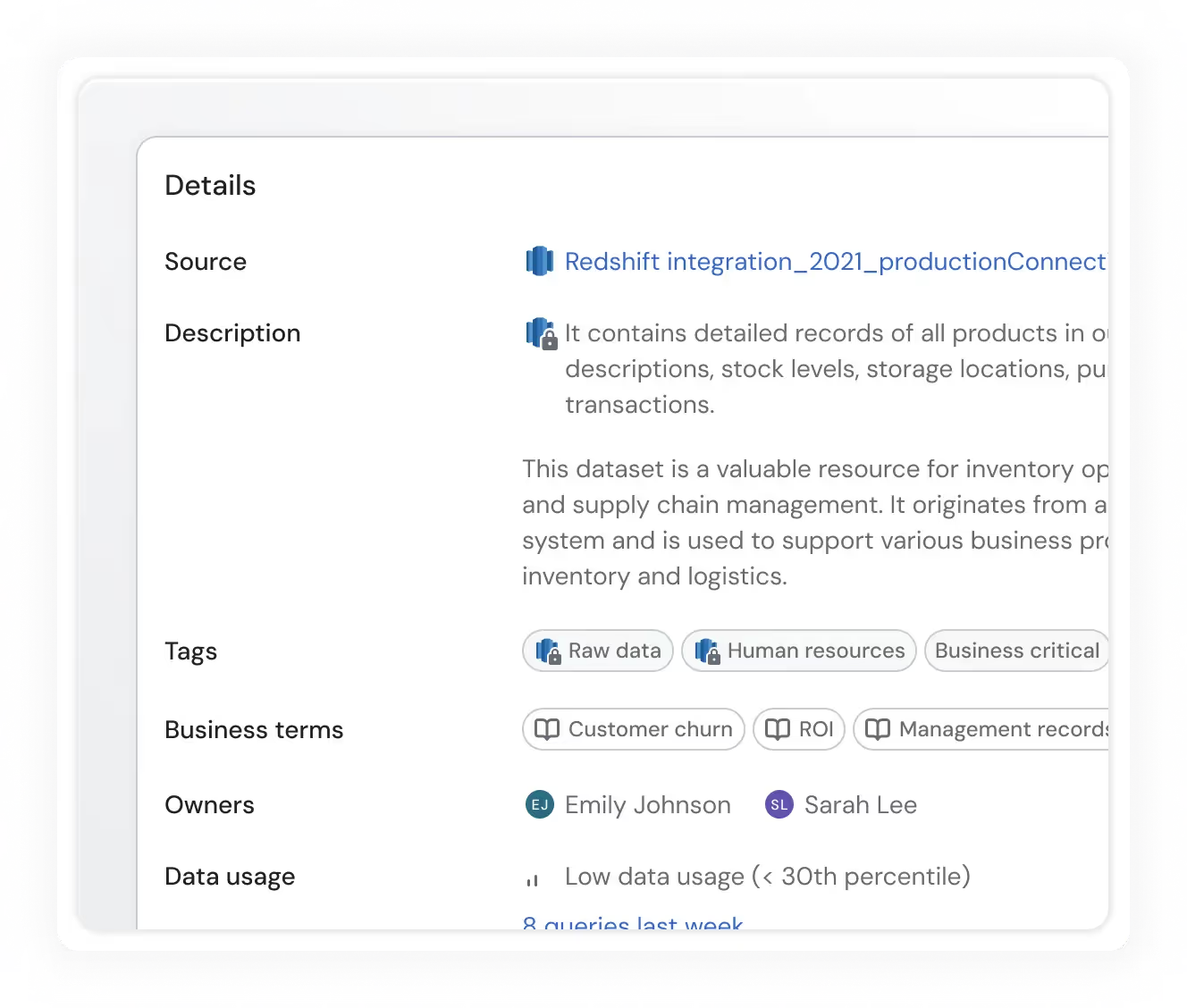

Exhaustive metadata

Sifflet leverages Redshift's internal metadata tables to retrieve information about your assets and enhance it with Sifflet-generated insights.

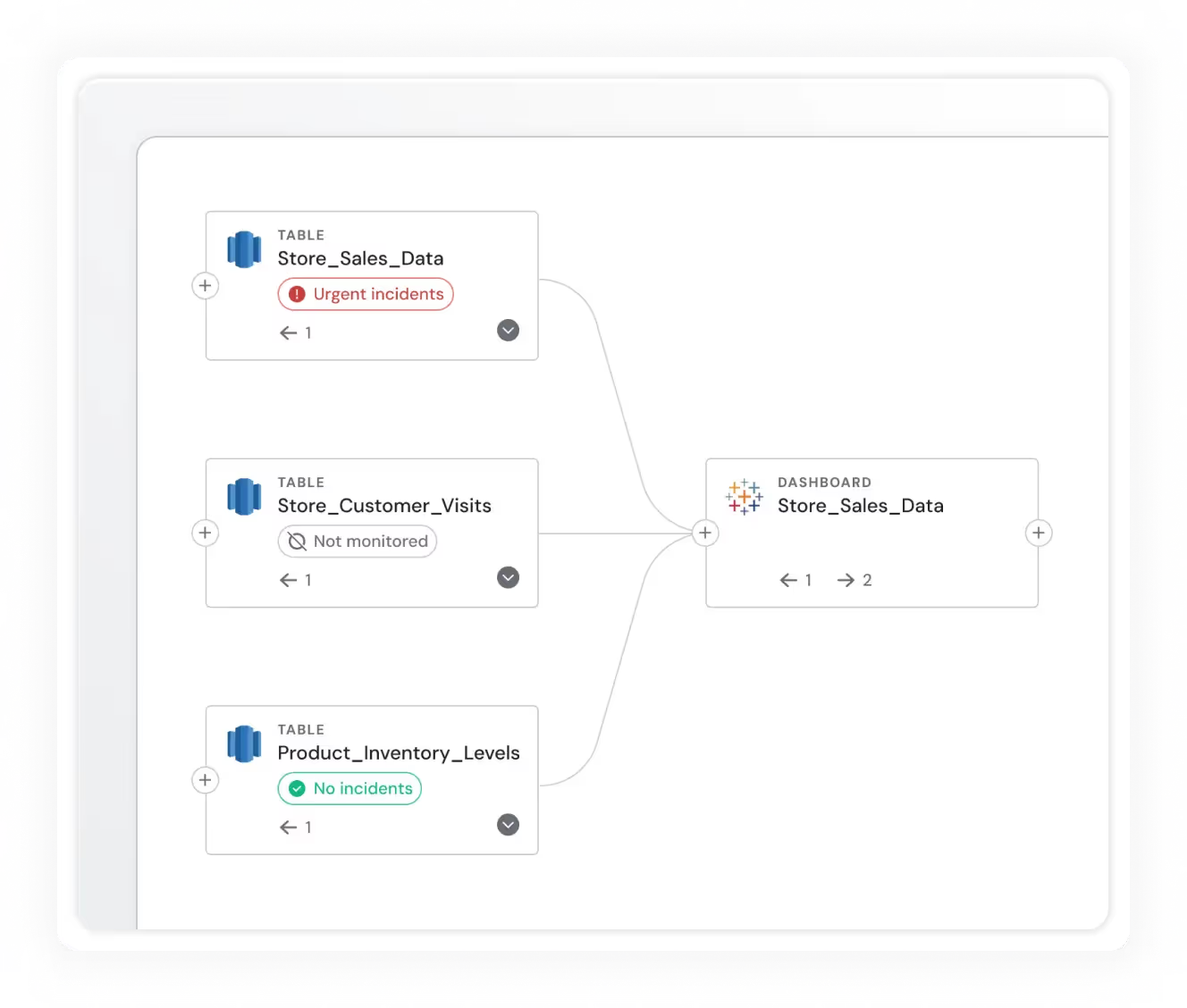

End-to-end lineage

Get a complete picture of how data flows through your platform with end-to-end lineage for Redshift.

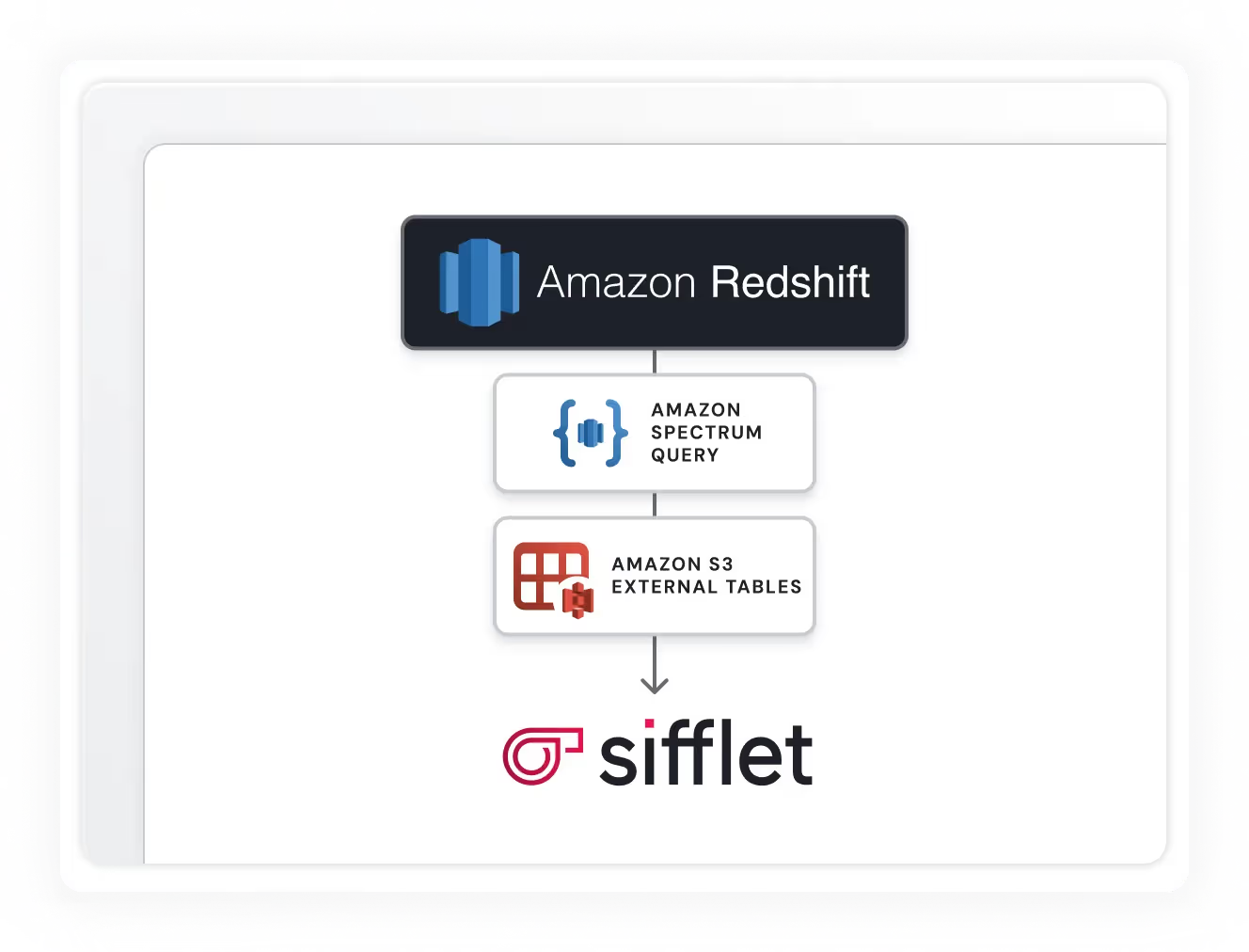

Redshift Spectrum support

Sifflet can monitor external tables via Redshift Spectrum, allowing you to ensure the quality of data stored in other systems like S3.


Frequently asked questions

What is SQL Table Tracer and how does it help with data observability?

SQL Table Tracer (STT) is a lightweight library that extracts table-level lineage from SQL queries. It plays a key role in data observability by identifying upstream and downstream tables, making it easier to understand data dependencies and track changes across your data pipelines.
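To illustrate what "table-level lineage" means, here is a minimal sketch that pulls upstream (read) and downstream (written) tables out of one SQL statement. It is a hypothetical, regex-based illustration and does NOT use or reproduce the SQL Table Tracer API; the function name `table_lineage` is made up for this example. A real tracer parses SQL properly, whereas regexes miss subqueries, quoted identifiers, and dialect quirks.

```python
import re

# Hypothetical sketch, NOT the SQL Table Tracer API: a naive regex pass.
# Known limitations: no real parsing, so e.g. EXTRACT(day FROM ts) or
# DELETE FROM x would be misread as table reads.
_TABLE = r"([A-Za-z_][\w.]*)"
_TARGET_RE = re.compile(
    r"\b(?:INSERT\s+INTO|CREATE\s+TABLE|MERGE\s+INTO)\s+" + _TABLE, re.IGNORECASE
)
_SOURCE_RE = re.compile(r"\b(?:FROM|JOIN)\s+" + _TABLE, re.IGNORECASE)
_CTE_RE = re.compile(r"\b([A-Za-z_]\w*)\s+AS\s*\(", re.IGNORECASE)


def table_lineage(sql: str) -> tuple[set[str], set[str]]:
    """Return (upstream_tables, downstream_tables) for a single statement."""
    downstream = {name.lower() for name in _TARGET_RE.findall(sql)}
    ctes = {name.lower() for name in _CTE_RE.findall(sql)}
    upstream = {name.lower() for name in _SOURCE_RE.findall(sql)}
    # CTE names are local to the query, so they are not real upstream tables;
    # the written table is not its own upstream either.
    upstream -= ctes | downstream
    return upstream, downstream
```

For an `INSERT INTO analytics.daily_revenue ... FROM orders_cte JOIN raw.payments` statement whose CTE reads `raw.orders`, this returns `raw.orders` and `raw.payments` as upstream and `analytics.daily_revenue` as downstream.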

What role do Common Table Expressions (CTEs) play in query optimization?

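CTEs simplify complex queries by breaking them into named, manageable steps. This improves readability and makes it easier to see which step is slow or produces wrong results, which speeds up root cause analysis and supports your data quality monitoring efforts. A CTE does not make a query faster by itself, but a clearer structure makes optimization opportunities easier to find.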
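As a small example, the query below uses a CTE to name an intermediate result (per-customer totals) so the final `SELECT` reads as one short, separate step. Python's built-in `sqlite3` is used only so the sketch runs anywhere; the `WITH` syntax is standard SQL and is also supported by Redshift. The `orders` table and the `big_spenders` helper are hypothetical.

```python
import sqlite3

# The CTE "customer_totals" isolates the aggregation step, so the outer
# query only has to filter and sort.
QUERY = """
WITH customer_totals AS (
    SELECT customer_id, SUM(amount) AS total
    FROM orders
    GROUP BY customer_id
)
SELECT customer_id, total
FROM customer_totals
WHERE total > 100
ORDER BY customer_id
"""


def big_spenders(rows: list[tuple[int, float]]) -> list[tuple[int, float]]:
    """Load (customer_id, amount) rows into a throwaway DB and run the CTE query."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE orders (customer_id INTEGER, amount REAL)")
        conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)
        return conn.execute(QUERY).fetchall()
    finally:
        conn.close()
```

If the totals look wrong, you can run the CTE body on its own to check the aggregation step in isolation, which is the debugging benefit described above.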

How do organizations monitor the success of their data governance programs?

Successful data governance is measured through KPIs that tie directly to business outcomes. This includes metrics like how quickly teams can find data, how often data quality issues are caught before reaching production, and how well teams follow access protocols. Observability tools help track these indicators by providing real-time metrics and alerting on governance-related issues.
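Two of the KPIs mentioned above reduce to simple arithmetic. The sketch below assumes a hypothetical `QualityIssue` record that notes whether an issue was caught before production, and a list of search-to-find times in minutes; neither structure comes from Sifflet, and how you collect these values depends on your own tooling.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class QualityIssue:
    # Hypothetical record: was the issue detected before reaching production?
    caught_before_production: bool


def catch_rate(issues: list[QualityIssue]) -> float:
    """Share of data quality issues caught before they reached production."""
    if not issues:
        raise ValueError("no issues recorded; the rate is undefined")
    caught = sum(1 for issue in issues if issue.caught_before_production)
    return caught / len(issues)


def median_time_to_find(minutes: list[float]) -> float:
    """Median minutes users needed to locate the dataset they searched for."""
    return median(minutes)  # raises statistics.StatisticsError if empty
```

The median is used for time-to-find so that a few very long searches do not dominate the indicator.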

How does Sifflet support real-time metrics and alerting within a data platform?

Sifflet collects and monitors real-time metrics like data freshness, schema changes, and volume anomalies. With dynamic thresholding and real-time alerts via Slack or email, teams can respond quickly and keep their analytics platform running smoothly.
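To make "dynamic thresholding" concrete, here is one common approach: flag a new value when it falls outside the mean ± k standard deviations of a window of recent values, so the threshold moves with the data. This is a generic statistical sketch, not Sifflet's actual detection algorithm; the function names and the default `k = 3.0` are assumptions. A fixed-age freshness check is included for comparison.

```python
from datetime import datetime, timedelta
from statistics import mean, stdev


def volume_is_anomalous(history: list[int], latest: int, k: float = 3.0) -> bool:
    """Flag `latest` if it lies outside mean ± k * stdev of the recent window."""
    if len(history) < 2:
        return False  # not enough data to estimate the spread
    mu, sigma = mean(history), stdev(history)
    # With sigma == 0, any change from a perfectly flat history is flagged.
    return abs(latest - mu) > k * sigma


def is_stale(last_update: datetime, max_age: timedelta, now: datetime) -> bool:
    """Freshness check; both datetimes must use the same timezone convention."""
    return now - last_update > max_age
```

For a daily row-count history of `[1000, 1020, 980, 1010, 990]`, the band is roughly 1000 ± 47, so a day with 1030 rows passes while a day with 400 rows is flagged.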

Why is data lineage a pillar of Full Data Stack Observability?

At Sifflet, we consider data lineage a core part of Full Data Stack Observability because it connects data quality monitoring with data discovery. By mapping data dependencies, teams can detect anomalies faster, perform accurate root cause analysis, and maintain trust in their data pipelines.
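The link between lineage and root cause analysis can be shown with a plain graph traversal: walking upstream from an anomalous table lists root-cause candidates, and walking downstream lists impacted assets. The sketch below uses breadth-first search over hypothetical table names; it illustrates the idea and is not how Sifflet stores or queries lineage.

```python
from collections import defaultdict, deque


def build_lineage(
    edges: list[tuple[str, str]],
) -> tuple[dict[str, set[str]], dict[str, set[str]]]:
    """Index (upstream, downstream) edges in both directions."""
    children: dict[str, set[str]] = defaultdict(set)
    parents: dict[str, set[str]] = defaultdict(set)
    for up, down in edges:
        children[up].add(down)
        parents[down].add(up)
    return children, parents


def reachable(graph: dict[str, set[str]], start: str) -> set[str]:
    """Breadth-first search: all nodes reachable from `start`.

    `start` itself is excluded unless a cycle leads back to it.
    """
    seen: set[str] = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen
```

With edges `raw.orders → staging.orders → mart.revenue → dashboard.kpis` and `raw.fx_rates → mart.revenue`, an anomaly on `mart.revenue` points upstream to `staging.orders`, `raw.orders`, and `raw.fx_rates`, while a problem in `staging.orders` would impact `mart.revenue` and `dashboard.kpis`.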

What’s a real-world example of Dailymotion using real-time metrics to drive business value?

One standout example is their ad inventory forecasting tool. By embedding real-time metrics into internal tools, sales teams can plan campaigns more precisely and avoid last-minute scrambles. It’s a great case of using data to improve both accuracy and efficiency.

How can data observability support better hiring decisions for data teams?

When you prioritize data observability, you're not just investing in tools; you're building a culture of transparency and accountability. This helps attract top-tier Data Engineers and Analysts who value high-quality pipelines and proactive monitoring. Embedding observability into your workflows also gives your team root cause analysis and pipeline health dashboards, helping them work more efficiently and effectively.

How does Sentinel help reduce alert fatigue in modern data environments?

Sentinel intelligently analyzes metadata like data lineage and schema changes to recommend what really needs monitoring. By focusing on high-impact areas, it cuts down on noise and helps teams manage alert fatigue while optimizing monitoring costs.
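One way to picture "recommend what really needs monitoring" is a scoring heuristic: rank tables by how many downstream assets depend on them, and boost tables with recent schema changes. The sketch below is a hypothetical heuristic, not Sentinel's actual logic; the function names, the `change_weight` bonus, and `top_n` are all assumptions made for illustration.

```python
from collections import defaultdict


def downstream_counts(edges: list[tuple[str, str]]) -> dict[str, int]:
    """For each table, count the distinct assets downstream of it."""
    children: dict[str, set[str]] = defaultdict(set)
    nodes: set[str] = set()
    for up, down in edges:
        children[up].add(down)
        nodes.update((up, down))
    counts: dict[str, int] = {}
    for node in nodes:
        seen: set[str] = set()
        stack = [node]
        while stack:  # depth-first walk over downstream dependents
            current = stack.pop()
            for child in children.get(current, ()):
                if child not in seen:
                    seen.add(child)
                    stack.append(child)
        counts[node] = len(seen)
    return counts


def recommend_monitors(
    edges: list[tuple[str, str]],
    recent_schema_changes: set[str],
    top_n: int = 2,
    change_weight: int = 2,
) -> list[str]:
    """Hypothetical ranking: downstream impact plus a bonus for schema churn."""
    scores = {
        table: count + (change_weight if table in recent_schema_changes else 0)
        for table, count in downstream_counts(edges).items()
    }
    # Highest score first; ties broken alphabetically for stable output.
    ranked = sorted(scores, key=lambda table: (-scores[table], table))
    return ranked[:top_n]
```

Limiting the output to the top-scoring tables is what keeps the number of monitors, and therefore alerts, small.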
