Shared Understanding. Ultimate Confidence. At Scale.

When everyone knows your data is systematically validated for quality, understands where it comes from and how it's transformed, and is aligned on freshness and SLAs, what’s not to trust?

Always Fresh. Always Validated.

No more explaining data discrepancies to the C-suite. Thanks to automatic and systematic validation, Sifflet ensures your data is always fresh and meets your quality requirements. Stakeholders know when data might be stale or interrupted, so they can make decisions with timely, accurate data.

- Automatically detect schema changes, null values, duplicates, or unexpected patterns that could compromise analysis.

- Set and monitor service-level agreements (SLAs) for critical data assets.

- Track when data was last updated and whether it meets freshness requirements.
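To make the checks above concrete, here is a minimal sketch of what schema-change, null-value, and duplicate detection can look like on a small in-memory table. It is illustrative only and does not reflect Sifflet's actual API. The function names (`schema_diff`, `null_counts`, `duplicate_keys`), the column-to-type mapping, and the rows-as-dictionaries representation are all hypothetical assumptions.

```python
from collections import Counter
from typing import Any

# Hypothetical representation: each row is a dict of column name -> value.
Row = dict[str, Any]


def schema_diff(expected: dict[str, str], observed: dict[str, str]) -> dict[str, list[str]]:
    """Compare column->type mappings and report added, removed, and retyped columns."""
    return {
        "added": sorted(set(observed) - set(expected)),
        "removed": sorted(set(expected) - set(observed)),
        "retyped": sorted(
            c for c in expected.keys() & observed.keys() if expected[c] != observed[c]
        ),
    }


def null_counts(rows: list[Row], columns: list[str]) -> dict[str, int]:
    """Count missing or None values per column."""
    return {c: sum(1 for r in rows if r.get(c) is None) for c in columns}


def duplicate_keys(rows: list[Row], key: str) -> list[Any]:
    """Return the key values that appear in more than one row."""
    counts = Counter(r[key] for r in rows)
    return sorted(k for k, n in counts.items() if n > 1)


# Usage with made-up sample data.
rows = [{"id": 1, "amount": 10.0}, {"id": 2, "amount": None}, {"id": 2, "amount": 5.0}]
print(schema_diff({"id": "int", "amount": "float"},
                  {"id": "int", "amount": "str", "currency": "str"}))
# {'added': ['currency'], 'removed': [], 'retyped': ['amount']}
print(null_counts(rows, ["id", "amount"]))  # {'id': 0, 'amount': 1}
print(duplicate_keys(rows, "id"))           # [2]
```

In a real deployment these checks would run as queries against the warehouse rather than over Python lists; the sketch only shows the logic of each check.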

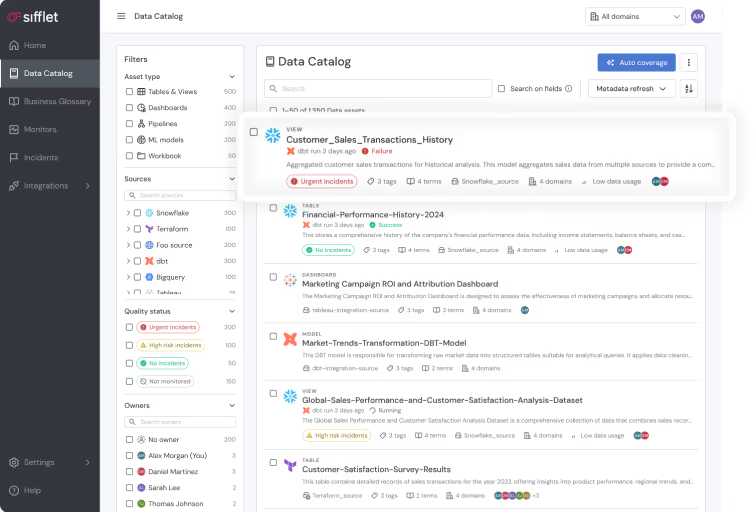

Understand Your Data, Inside and Out

Give data analysts and business users ultimate clarity. Sifflet helps teams understand their data across its whole lifecycle, and gives full context like business definitions, known limitations, and update frequencies, so everyone works from the same assumptions.

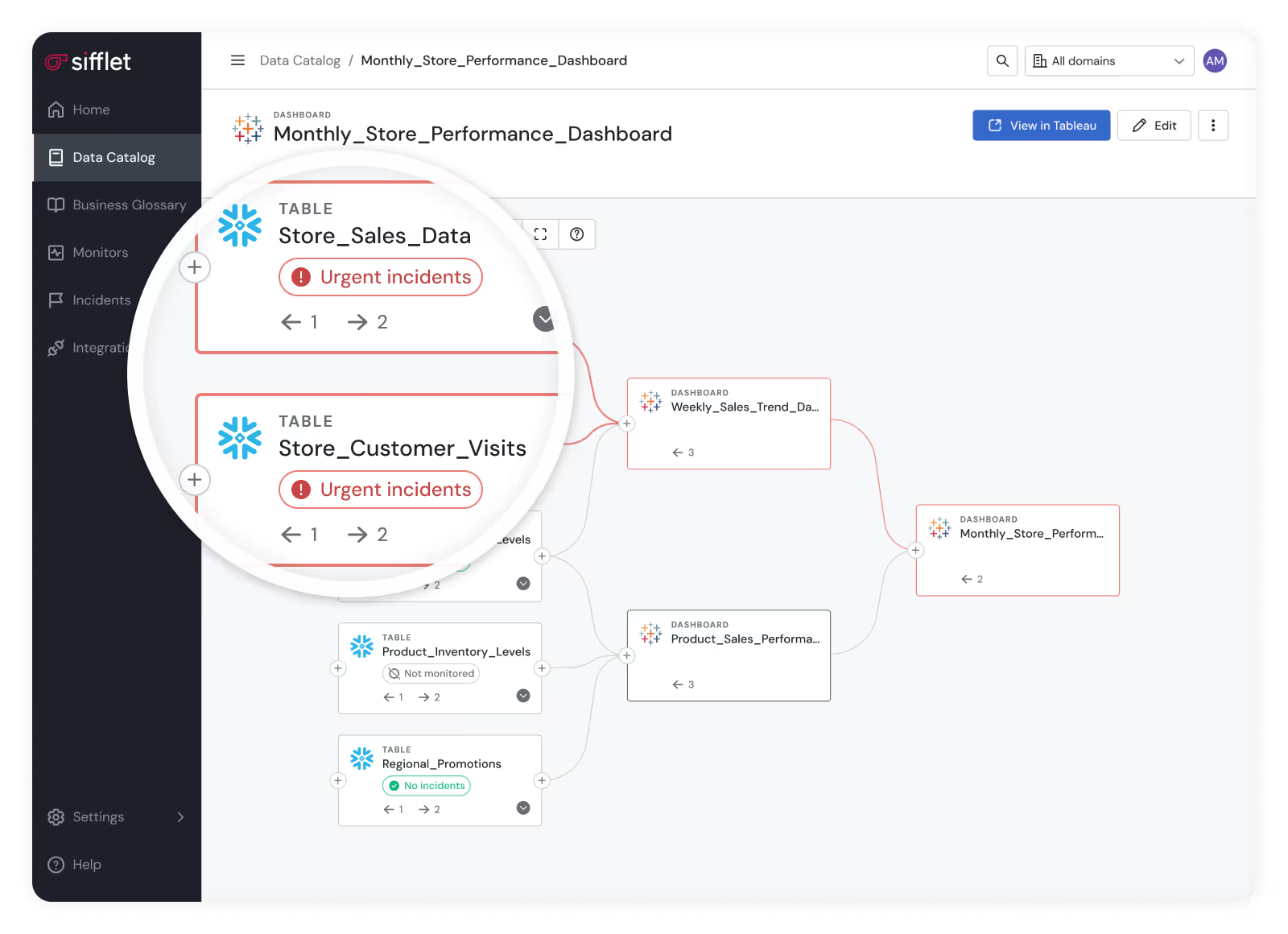

- Create transparency by helping users understand data pipelines, so they always know where data comes from and how it’s transformed.

- Develop a shared understanding of data that prevents misinterpretation and builds confidence in analytics outputs.

- Quickly assess which downstream reports and dashboards are affected when an issue occurs.
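Assessing downstream impact amounts to walking a lineage graph from the asset that has a problem. The sketch below uses a breadth-first traversal over a hypothetical lineage map (asset name to its direct consumers). The asset names and the `downstream_assets` function are illustrative assumptions, not Sifflet's data model or API.

```python
from collections import deque


def downstream_assets(lineage: dict[str, list[str]], source: str) -> list[str]:
    """Breadth-first walk over a lineage graph (asset -> direct consumers),
    returning every asset reachable from `source` in discovery order."""
    seen = {source}
    order: list[str] = []
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:  # skip assets already reached via another path
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


# Hypothetical lineage: a staging table feeds two marts, which feed dashboards.
lineage = {
    "raw.orders": ["stg.orders"],
    "stg.orders": ["mart.revenue", "mart.customers"],
    "mart.revenue": ["dash.exec_kpis"],
    "mart.customers": ["dash.exec_kpis", "dash.churn"],
}
impacted = downstream_assets(lineage, "stg.orders")
print(impacted)  # ['mart.revenue', 'mart.customers', 'dash.exec_kpis', 'dash.churn']
print([a for a in impacted if a.startswith("dash.")])  # ['dash.exec_kpis', 'dash.churn']
```

The `seen` set keeps assets with several upstream paths (such as `dash.exec_kpis` here) from being reported twice.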

Still have a question in mind?

Contact Us

Frequently asked questions

How does Sifflet support collaboration across data teams?

Sifflet breaks down data quality silos by offering a unified platform where data engineers, analysts, and business users can collaborate. Features like pipeline health dashboards, data lineage tracking, and automated incident reports help teams stay aligned and respond quickly to issues.

Can data lineage help with regulatory compliance such as GDPR?

Absolutely. Data lineage supports data governance by mapping data flows and access rights, which is essential for compliance with regulations like GDPR. Features like automated PII propagation help teams monitor sensitive data and enforce security observability best practices.

How does Sifflet help with data freshness monitoring?

At Sifflet, we offer a powerful Freshness Monitor that tracks when your data arrives and alerts you if it's missing or delayed. Whether you're working with batch or streaming pipelines, our observability platform makes it easy to stay on top of data freshness and keep your analytics accurate and timely.

What exactly is data quality, and why should teams care about it?

Data quality refers to how accurate, complete, consistent, and timely your data is. It's essential because poor data quality can lead to unreliable analytics, missed business opportunities, and even financial losses. Investing in data quality monitoring helps teams regain trust in their data and make confident, data-driven decisions.

What makes Sifflet’s Data Catalog different from built-in catalogs like Snowsight or Unity Catalog?

Unlike tool-specific catalogs, Sifflet serves as a 'Catalog of Catalogs.' It brings together metadata from across your entire data ecosystem, providing a single source of truth for data lineage tracking, asset discovery, and SLA compliance.

What role does data observability play in preventing freshness incidents?

Data observability gives you the visibility to detect freshness problems before they impact the business. By combining metrics like data age, expected vs. actual arrival time, and pipeline health dashboards, observability tools help teams catch delays early, trace where things broke down, and maintain trust in real-time metrics.
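The metrics named above, data age and expected vs. actual arrival time, can be combined into a simple freshness check. This is a minimal sketch under stated assumptions: the dataset is expected to refresh on a fixed interval, and a grace period is tolerated before the check counts as breached. The `check_freshness` function, its parameters, and the sample timestamps are hypothetical and do not describe how Sifflet's Freshness Monitor is implemented.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class FreshnessResult:
    data_age: timedelta       # time since the last successful update
    expected_next: datetime   # when the next update should have arrived
    lateness: timedelta       # how far past expected_next we are (0 if not yet due)
    breached: bool            # True if lateness exceeds the allowed grace period


def check_freshness(last_updated: datetime, now: datetime,
                    expected_interval: timedelta, grace: timedelta) -> FreshnessResult:
    """Compare the actual arrival pattern against the expected refresh interval."""
    data_age = now - last_updated
    expected_next = last_updated + expected_interval
    lateness = max(now - expected_next, timedelta(0))
    return FreshnessResult(data_age, expected_next, lateness, lateness > grace)


# Hypothetical hourly table, last updated at 08:00 UTC, checked at 10:30 UTC.
result = check_freshness(
    last_updated=datetime(2024, 1, 1, 8, 0, tzinfo=timezone.utc),
    now=datetime(2024, 1, 1, 10, 30, tzinfo=timezone.utc),
    expected_interval=timedelta(hours=1),
    grace=timedelta(minutes=30),
)
print(result.data_age)  # 2:30:00
print(result.lateness)  # 1:30:00
print(result.breached)  # True
```

Using timezone-aware datetimes avoids false alarms when pipelines and monitors run in different time zones.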

Can agentic observability help reduce alert fatigue for data teams?

Absolutely. One of the biggest advantages of agentic observability is alert fatigue reduction. Instead of flooding teams with scattered alerts, agents like Sage consolidate related issues into a single, coherent narrative. This focused approach allows teams to prioritize what matters most and respond faster, improving both efficiency and data observability.

What role did data observability play in improving Meero's data reliability?

Data observability was key to Meero's success in maintaining reliable data pipelines. By using Sifflet’s observability platform, they could monitor data freshness, schema changes, and volume anomalies, ensuring their data remained trustworthy and accurate for business decision-making.
